1. Executive Summary

Video streaming platforms succeed or fail based on one metric: viewer experience under pressure.

For OTT providers delivering live television and premium on-demand content, performance during peak concurrency moments—live sports events, breaking news, or prime-time entertainment—can determine whether subscribers remain loyal or abandon the platform.

A rapidly growing IPTV provider approached Boffin Coders after their platform began showing signs of structural failure as their audience scaled internationally.

Despite strong subscriber acquisition, the platform faced severe operational challenges:

- Streams buffering during peak hours

- Platform instability during live sports broadcasts

- Slow start times on mobile devices

- Escalating CDN costs

- Architecture incapable of scaling with demand

These failures had measurable business consequences.

User churn began rising. App store reviews dropped below acceptable thresholds. Subscriber growth slowed dramatically.

The underlying issue was not simply performance tuning—it was an architecture designed for thousands of viewers attempting to serve hundreds of thousands.

Our task was not merely to optimize the system.

It was to re-engineer the streaming platform into a resilient, globally scalable OTT infrastructure capable of supporting 500K+ concurrent viewers without compromising video quality or user experience.

2. The Client Context

The client is a fast-growing IPTV provider distributing:

- Live TV channels

- International sports broadcasts

- Movies and TV series

- Video-on-demand content

Their service operates across multiple countries, serving viewers on:

- Smart TVs

- Web platforms

- Android devices

- iOS devices

The business model relies heavily on subscription revenue, where retention depends almost entirely on streaming reliability.

While the platform performed adequately during early growth stages, the business quickly encountered a classic challenge faced by many OTT startups:

Audience growth outpaced infrastructure maturity.

What initially worked for 20,000 viewers began collapsing under 200,000+ viewers during live broadcasts.

Live sports events became the most critical failure points.

During high-traffic moments, the platform exhibited:

- Sudden spikes in buffering

- Slow stream initialization

- Backend service crashes

- Delayed playback synchronization

From the user’s perspective, this translated into a simple but damaging experience:

The stream didn't work when it mattered most.

And in OTT platforms, failure during live events is often unrecoverable from a brand perspective.

3. The Diagnostic Phase

Before proposing any solution, our team conducted a deep diagnostic assessment of the entire streaming ecosystem.

Rather than focusing on isolated symptoms—buffering or crashes—we evaluated the entire video delivery pipeline, from ingestion to playback.

The investigation examined five core layers:

1. Video Encoding & Packaging

We evaluated how live video streams were being encoded and segmented for distribution.

Key questions included:

- Were streams properly optimized for adaptive bitrate delivery?

- Was segment generation creating latency bottlenecks?

- Was the encoding pipeline scalable?

2. Streaming Protocol & Player Performance

We assessed the HLS streaming implementation and playback performance across devices.

Particular attention was given to:

- Initial stream startup latency

- Adaptive bitrate switching behavior

- Mobile playback efficiency under poor network conditions

3. Backend Service Architecture

The backend infrastructure was analyzed for:

- API request load capacity

- Session authentication performance

- Content metadata retrieval latency

- Horizontal scalability of core services

This layer proved especially critical during peak traffic spikes.

4. CDN Distribution Efficiency

The CDN architecture was examined to understand:

- Cache hit ratios

- Geographic latency patterns

- Origin request spikes

- Cost inefficiencies in content delivery

While the platform relied on a globally recognized CDN provider, the distribution strategy itself was poorly optimized.

5. Observability & Monitoring

Perhaps the most revealing discovery was the lack of true system observability.

The platform had minimal insight into:

- Real-time viewer concurrency

- Streaming failures

- Segment delivery latency

- Infrastructure stress points

Without proper monitoring, the engineering team was effectively reacting to failures after users experienced them.

4. Root Cause Analysis of Streaming Failures

After completing the diagnostic phase, several systemic issues became clear.

The performance failures were not the result of a single bottleneck.

They were the result of architectural misalignment across the entire streaming pipeline.

Root Cause 1: Monolithic Backend Architecture

The existing backend relied on a monolithic service structure, where authentication, metadata retrieval, and streaming session management were tightly coupled.

Under high load, this architecture created cascading failures:

- Authentication delays slowed stream access

- Metadata queries blocked playback initialization

- Backend services crashed under concurrency spikes

This structure fundamentally limited horizontal scalability.

Root Cause 2: Inefficient Video Packaging

Live streams were being processed with suboptimal segment durations and encoding profiles, which increased buffering probability under fluctuating network conditions.

Longer segments meant:

- Slower adaptive bitrate switching

- Delayed playback recovery after packet loss

- Increased perceived buffering
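A rough estimate makes the cost of long segments concrete. The sketch below assumes a player that buffers a fixed number of whole segments before it starts and that can only change rendition at a segment boundary. The function names, the three-segment buffer, and the 10 s and 4 s durations are illustrative assumptions, not measurements from the client's platform.

```typescript
// Rough model (assumption): a player that buffers `bufferedSegments` whole
// segments before starting trails the live edge by about that many durations.
function estimateLiveDelaySeconds(segmentSeconds: number, bufferedSegments: number): number {
  return segmentSeconds * bufferedSegments;
}

// A rendition switch can only happen at a segment boundary, so the next
// request at a new bitrate is at most one segment duration away.
function maxSwitchWaitSeconds(segmentSeconds: number): number {
  return segmentSeconds;
}

// Hypothetical comparison: 10-second segments versus 4-second segments.
const delayWithLongSegments = estimateLiveDelaySeconds(10, 3);  // 30
const delayWithShortSegments = estimateLiveDelaySeconds(4, 3);  // 12
const switchWaitLong = maxSwitchWaitSeconds(10);                // 10
const switchWaitShort = maxSwitchWaitSeconds(4);                // 4
```

Under these assumptions, shortening segments cuts both the distance from the live edge and the time the player must wait before it can react to a bandwidth drop.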

Root Cause 3: Poor CDN Cache Strategy

The CDN configuration failed to fully leverage edge caching efficiency.

Instead of maximizing edge delivery, frequent requests were hitting the origin servers, causing:

- Origin overload during peak events

- Increased latency for viewers

- Unnecessary CDN cost spikes
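The link between cache hit ratio and origin overload can be sketched with simple arithmetic. The example assumes each viewer requests roughly one media segment per segment duration; the viewer count, segment length, and hit ratios are hypothetical values chosen for illustration, not figures from the engagement.

```typescript
// Assumption: each viewer fetches about one media segment per segment duration.
function edgeSegmentRequestsPerSecond(viewers: number, segmentSeconds: number): number {
  return viewers / segmentSeconds;
}

// Requests that miss the edge cache fall through to the origin.
function originRequestsPerSecond(edgeRequestsPerSecond: number, cacheHitRatio: number): number {
  if (cacheHitRatio < 0 || cacheHitRatio > 1) {
    throw new RangeError("cacheHitRatio must be between 0 and 1");
  }
  return edgeRequestsPerSecond * (1 - cacheHitRatio);
}

// Hypothetical event: 200,000 viewers on 4-second segments.
const edgeLoad = edgeSegmentRequestsPerSecond(200000, 4);     // 50000 requests/s at the edge
const originAt80 = originRequestsPerSecond(edgeLoad, 0.8);    // about 10,000 requests/s reach the origin
const originAt99 = originRequestsPerSecond(edgeLoad, 0.99);   // about 500 requests/s reach the origin
```

In this model, raising the hit ratio from 80% to 99% reduces origin traffic by roughly a factor of twenty, which is why cache configuration matters as much as the choice of CDN provider.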

Root Cause 4: Lack of Adaptive Streaming Optimization

The player lacked sophisticated adaptive bitrate strategies optimized for:

- Mobile networks

- Fluctuating bandwidth conditions

- Regional network variability

This was the primary driver behind poor mobile streaming performance.

Root Cause 5: Absence of Real-Time System Visibility

Without proper monitoring tools, the engineering team had no early warning signals for infrastructure stress.

By the time alerts were triggered, the user experience had already degraded.

This meant that even minor traffic surges could escalate into full platform instability.

Closing Insight of the Diagnostic Phase

The conclusion was unavoidable.

The platform’s issues were not simply operational—they were architectural.

The system had been designed for linear growth, while the business had entered exponential demand territory.

Solving this required a complete rethinking of the platform’s architecture:

- Microservice-based backend services

- Optimized HLS streaming pipelines

- Global CDN distribution strategy

- Secure tokenized streaming

- Real-time observability infrastructure

Only by redesigning the streaming platform from the ground up could the system support hundreds of thousands of concurrent viewers without compromising performance.

PART 2 — The Architecture

5. Solution Strategy

After completing the diagnostic phase, one reality became clear: the platform’s performance issues were structural rather than incidental. Incremental improvements such as increasing server capacity or optimizing a few database queries would only delay the inevitable. The existing architecture was not designed to support the level of concurrency the business was rapidly approaching.

To solve this problem sustainably, the platform needed to be re-architected from the ground up with scalability, resilience, and operational predictability at its core.

Our solution strategy focused on five architectural principles.

Horizontal Scalability

The previous infrastructure relied heavily on vertically scaled servers. While this approach can work in early stages, it becomes inefficient and unstable under heavy load.

The redesigned system introduced a microservices-based architecture capable of horizontal scaling. Instead of depending on a few large servers, the platform distributes workloads across multiple independent services that can scale automatically during demand spikes.

This architecture allows the system to dynamically allocate resources during high-traffic events such as live sports broadcasts, ensuring uninterrupted performance even during sudden traffic surges.
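As an illustration of how automatic scaling decisions are commonly made, the sketch below applies a proportional rule: the replica count grows or shrinks by the ratio of observed load to target load. This is the same rule the Kubernetes Horizontal Pod Autoscaler documents, but the source does not say which orchestrator or autoscaler the platform uses, so the function name, parameters, and numbers are all assumptions.

```typescript
// Proportional scaling rule (illustrative): scale the replica count by the
// ratio of observed load to target load, then clamp to configured bounds.
function desiredReplicas(
  currentReplicas: number,
  observedLoadPerReplica: number,
  targetLoadPerReplica: number,
  minReplicas: number,
  maxReplicas: number
): number {
  const raw = Math.ceil(currentReplicas * (observedLoadPerReplica / targetLoadPerReplica));
  return Math.min(maxReplicas, Math.max(minReplicas, raw));
}

// Hypothetical spike: 10 replicas each seeing 900 requests/s against a
// target of 600 requests/s per replica, with bounds of 4 and 50 replicas.
const duringSpike = desiredReplicas(10, 900, 600, 4, 50);  // 15
const duringQuiet = desiredReplicas(10, 100, 600, 4, 50);  // 4 (clamped to the minimum)
```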

Decoupled Video Delivery Pipeline

One of the most critical architectural changes involved separating the video delivery pipeline from core backend services.

Previously, delays in authentication or metadata APIs could affect video playback performance. To eliminate this dependency, the new architecture separated key platform components:

- Authentication services

- Metadata and content catalog services

- Streaming session management

- Video delivery infrastructure

By decoupling these layers, video playback remains stable even if backend APIs experience increased load. This separation significantly improves platform resilience.

Edge-Optimized Global Distribution

Video streaming performance is fundamentally determined by network proximity and efficient content distribution.

To address this, we implemented a global CDN distribution strategy designed to:

- Maximize edge caching efficiency

- Minimize origin server requests

- Reduce viewer latency across geographic regions

By ensuring that video segments are served from the nearest edge node, the platform significantly improved playback speed while also reducing infrastructure costs.

Adaptive Streaming Optimization

Users access streaming platforms across a wide range of network environments. Mobile networks in particular introduce unpredictable fluctuations in bandwidth.

To address this challenge, we implemented optimized HLS adaptive bitrate streaming.

This approach dynamically adjusts video quality based on real-time network conditions. When bandwidth drops, the player automatically switches to a lower bitrate stream to maintain uninterrupted playback. When bandwidth improves, the player upgrades quality seamlessly.

This optimization is essential for delivering consistent streaming experiences across devices and network conditions.
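The core of a throughput-based adaptive bitrate decision can be sketched in a few lines. This is a simplified illustration, not the player logic shipped in the project: real players also weigh buffer level and switch history. The `Rendition` type, the ladder values, and the 0.8 safety factor are assumptions.

```typescript
interface Rendition {
  label: string;
  bitrateKbps: number;
}

// Pick the highest-bitrate rendition that fits within a safety margin of the
// measured throughput; fall back to the lowest rendition if none fits.
function selectRendition(ladder: Rendition[], throughputKbps: number, safetyFactor = 0.8): Rendition {
  if (ladder.length === 0) throw new Error("ladder must not be empty");
  const sorted = [...ladder].sort((a, b) => a.bitrateKbps - b.bitrateKbps);
  const budget = throughputKbps * safetyFactor;
  // reduce starts from the lowest rendition and keeps upgrading while it fits.
  return sorted.reduce((best, r) => (r.bitrateKbps <= budget ? r : best));
}

// Hypothetical bitrate ladder.
const exampleLadder: Rendition[] = [
  { label: "240p", bitrateKbps: 400 },
  { label: "480p", bitrateKbps: 1200 },
  { label: "720p", bitrateKbps: 2800 },
  { label: "1080p", bitrateKbps: 5000 },
];

const onGoodWifi = selectRendition(exampleLadder, 10000);  // 1080p
const onTypicalLte = selectRendition(exampleLadder, 4000); // 720p
const onWeakSignal = selectRendition(exampleLadder, 300);  // 240p (nothing fits, lowest is used)
```

The safety factor leaves headroom so that a small dip in bandwidth does not immediately stall playback.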

Real-Time Observability

Operating a large-scale streaming platform requires complete visibility into system performance.

We implemented an observability framework capable of monitoring critical operational metrics such as:

- Real-time viewer concurrency

- Video segment delivery latency

- API error rates

- CDN performance metrics

This monitoring infrastructure allows engineering teams to detect anomalies early and resolve issues before they impact end users.
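One way to turn a metric such as segment delivery latency into an early warning is to watch a high percentile rather than the average, since averages hide the slow tail that viewers actually feel. The sketch below uses a nearest-rank percentile over a window of samples; the function names, the 95th percentile, and the latency budget are hypothetical choices, not the thresholds used on the platform.

```typescript
// Nearest-rank percentile over a window of samples (p in the range 0..100).
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100));
  return sorted[rank - 1];
}

// Flags a window whose 95th-percentile latency is above the budget.
function exceedsLatencyBudget(latenciesMs: number[], budgetMs: number): boolean {
  return percentile(latenciesMs, 95) > budgetMs;
}

// Hypothetical window: most segments arrive quickly, a few are very slow.
const windowMs = [80, 85, 90, 95, 100, 105, 110, 120, 900, 950];
const shouldAlert = exceedsLatencyBudget(windowMs, 500); // true: the p95 sample is 950 ms
```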

6. Why This Tech Stack Was Selected

The technology stack for the platform was chosen with three priorities in mind: performance, scalability, and operational reliability.

Backend — Node.js Microservices

Node.js was selected as the core backend runtime because its event-driven, non-blocking I/O model is well suited to applications that must handle high concurrency.

Key benefits include:

- Efficient handling of simultaneous connections

- Lightweight service containers

- Fast API response times

- Strong scalability under heavy traffic

The backend was divided into independent microservices, including:

- Authentication service

- User profile service

- Content catalog service

- Streaming session manager

- Analytics service

This architecture ensures that each service can scale independently based on system demand.

Frontend — React Web Platform

The web application was rebuilt using React to deliver a responsive and modular user experience.

React enables:

- Faster UI rendering

- Component-based development

- Improved state management

- Seamless integration with streaming APIs

These advantages significantly improved the responsiveness and stability of the web platform.

Mobile Applications — Flutter

For mobile applications, we implemented a cross-platform development approach using Flutter.

Flutter enables:

- A single shared codebase for Android and iOS

- Near-native performance

- Faster development cycles

- Consistent UI across platforms

For streaming platforms, Flutter’s rendering performance helps maintain smooth playback even on mid-range mobile devices.

Video Processing — FFmpeg + AWS Media Services

The video processing pipeline combined FFmpeg with AWS Media Services to provide a flexible and scalable encoding infrastructure.

This pipeline supports:

- High-quality video encoding

- Adaptive bitrate generation

- Efficient HLS packaging for streaming

AWS MediaLive and MediaConvert provide robust infrastructure for managing both live and on-demand video workflows.
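For readers unfamiliar with HLS packaging, the output of adaptive bitrate generation is a master playlist that lists one variant stream per rendition. In practice the packager writes this file; the sketch below only illustrates its structure. The `HlsVariant` type, the URIs, and the bitrates are made-up examples.

```typescript
interface HlsVariant {
  uri: string;          // relative path to the variant's media playlist
  bandwidthBps: number; // peak bitrate in bits per second
  width: number;
  height: number;
}

// Builds a minimal HLS master playlist: one EXT-X-STREAM-INF tag per variant,
// each followed by the URI of that variant's media playlist.
function buildMasterPlaylist(variants: HlsVariant[]): string {
  const lines = ["#EXTM3U", "#EXT-X-VERSION:3"];
  for (const v of variants) {
    lines.push(`#EXT-X-STREAM-INF:BANDWIDTH=${v.bandwidthBps},RESOLUTION=${v.width}x${v.height}`);
    lines.push(v.uri);
  }
  return lines.join("\n") + "\n";
}

// Hypothetical two-rendition ladder.
const masterPlaylist = buildMasterPlaylist([
  { uri: "720p/index.m3u8", bandwidthBps: 2800000, width: 1280, height: 720 },
  { uri: "480p/index.m3u8", bandwidthBps: 1200000, width: 854, height: 480 },
]);
```

The player reads this file once, then chooses among the listed variants as network conditions change.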

Data Layer — MongoDB

MongoDB was selected as the primary data store due to its flexible document-based architecture.

This structure allows the platform to efficiently manage dynamic metadata including:

- Content catalog data

- Channel schedules

- Playback history

- User preferences

MongoDB’s scalability and schema flexibility enable rapid iteration as the platform evolves.

Streaming Distribution — Amazon CloudFront

To deliver video globally, the platform relies on the Amazon CloudFront CDN.

CloudFront provides:

- Global edge locations

- Intelligent caching

- Low-latency video delivery

- Cost-efficient bandwidth distribution

Through careful cache configuration, the platform significantly reduced origin traffic while improving delivery speed.
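A common pattern for live HLS caching is to treat playlists and media segments differently: a live playlist changes every time a segment is added, while a segment never changes once written. The sketch below expresses that policy as `Cache-Control` headers set at the origin. The source does not describe the actual cache rules, so the function name, TTL values, and file extensions are assumptions.

```typescript
// Chooses a Cache-Control header by object type (illustrative policy).
function cacheControlFor(path: string, segmentSeconds: number): string {
  if (/\.m3u8$/.test(path)) {
    // Live playlists are rewritten every segment, so keep the TTL short.
    const ttl = Math.max(1, Math.floor(segmentSeconds / 2));
    return `public, max-age=${ttl}`;
  }
  if (/\.(ts|m4s)$/.test(path)) {
    // Media segments are never modified after they are published.
    return "public, max-age=86400, immutable";
  }
  // Anything else (for example API responses) is not cached in this sketch.
  return "no-store";
}

const playlistHeader = cacheControlFor("live/ch1/index.m3u8", 4); // "public, max-age=2"
const segmentHeader = cacheControlFor("live/ch1/seg_1042.ts", 4); // "public, max-age=86400, immutable"
```

Long-lived segment caching is what keeps repeat requests at the edge, while the short playlist TTL keeps viewers close to the live edge.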

Authentication — JWT Secure Streaming Tokens

Subscription-based streaming platforms require strong content protection.

We implemented a security layer combining JWT authentication with secure streaming tokens. This helps block unauthorized access and limits stream sharing and piracy.
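The kind of check a streaming token enables can be sketched as follows. The example validates the claims of a token whose signature has already been verified by a JWT library; signature verification itself is out of scope here. The `pathPrefix` claim, the type names, and the paths are hypothetical and are not taken from the project.

```typescript
interface StreamTokenClaims {
  sub: string;        // subscriber id
  exp: number;        // expiry, in seconds since the Unix epoch
  pathPrefix: string; // hypothetical claim: the stream path this token unlocks
}

interface TokenDecision {
  allowed: boolean;
  reason: string;
}

// Validates claims from a token whose signature was ALREADY verified upstream.
function checkStreamToken(claims: StreamTokenClaims, requestPath: string, nowSeconds: number): TokenDecision {
  if (claims.exp <= nowSeconds) {
    return { allowed: false, reason: "expired" };
  }
  // indexOf(...) === 0 means the request path begins with the allowed prefix.
  if (requestPath.indexOf(claims.pathPrefix) !== 0) {
    return { allowed: false, reason: "path not covered by token" };
  }
  return { allowed: true, reason: "ok" };
}

// Hypothetical token that unlocks one live channel until t = 1000.
const sampleClaims: StreamTokenClaims = { sub: "user-42", exp: 1000, pathPrefix: "live/ch1/" };
const sameChannel = checkStreamToken(sampleClaims, "live/ch1/seg_1.ts", 900);  // allowed
const otherChannel = checkStreamToken(sampleClaims, "live/ch2/seg_1.ts", 900); // rejected: wrong path
```

Short expiry times and path-scoped claims mean a leaked URL stops working quickly and cannot be reused for other channels.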

Monitoring — Prometheus and Grafana

To enable full system observability, we deployed a monitoring framework using Prometheus and Grafana.

This system tracks:

- Service performance

- Infrastructure health

- Video delivery metrics

- Error rates across microservices

Custom dashboards allow engineers to monitor platform health in real time.
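Prometheus collects metrics by scraping a plain-text endpoint exposed by each service. The sketch below renders a single gauge in that text exposition format to show what a service would publish. A production service would normally use a client library instead of hand-formatting, and the metric name and label here are invented for illustration.

```typescript
// Renders one gauge in the Prometheus text exposition format.
// Note: label values are not escaped in this sketch.
function renderGauge(name: string, help: string, value: number, labels: Record<string, string> = {}): string {
  const labelPairs = Object.keys(labels).map((k) => `${k}="${labels[k]}"`);
  const labelText = labelPairs.length > 0 ? `{${labelPairs.join(",")}}` : "";
  return `# HELP ${name} ${help}\n# TYPE ${name} gauge\n${name}${labelText} ${value}\n`;
}

// Hypothetical metric: current concurrent viewers in one region.
const viewersMetric = renderGauge("ott_concurrent_viewers", "Current concurrent viewers", 1234, { region: "eu" });
// viewersMetric is:
// # HELP ott_concurrent_viewers Current concurrent viewers
// # TYPE ott_concurrent_viewers gauge
// ott_concurrent_viewers{region="eu"} 1234
```

Grafana dashboards and alert rules are then built on queries over series like this one.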

7. OTT Platform Architecture

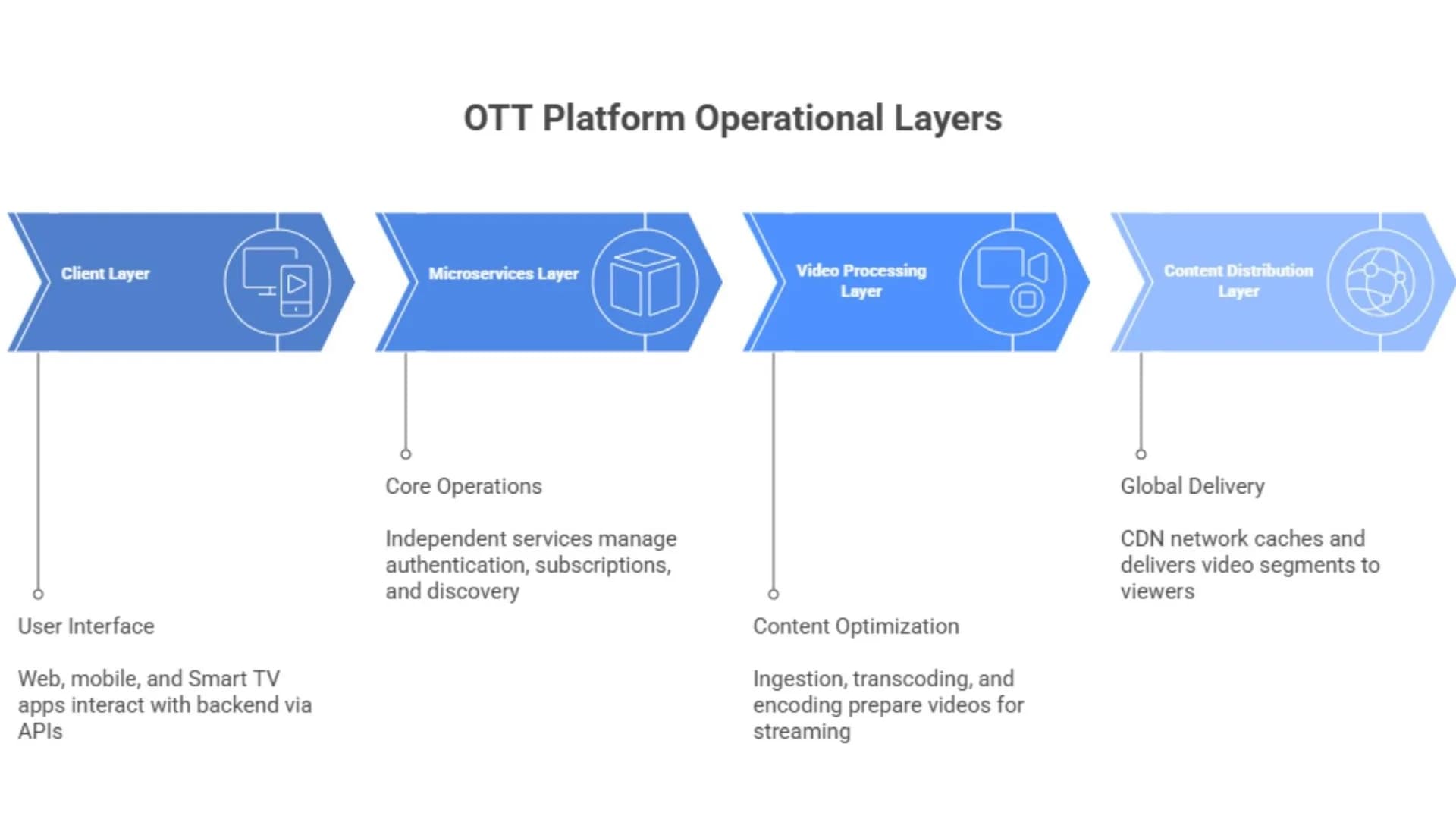

The final architecture consists of four operational layers.

- Client Layer: User-facing platforms include web applications built with React, mobile applications developed in Flutter, and integrations with Smart TV devices. These clients communicate with backend services through API gateways.

- Microservices Layer: The microservices layer manages core platform operations including authentication, subscription validation, content discovery, and streaming authorization. Because services operate independently, traffic spikes affecting one component do not impact the entire system.

- Video Processing Layer: This layer handles video ingestion, transcoding, adaptive bitrate encoding, and HLS segment generation. Both live and on-demand content are processed into optimized streaming formats.

- Content Distribution Layer: The final layer distributes video content through a global CDN network. Edge caching ensures that viewers receive video segments from the nearest location, reducing latency and buffering.

PART 3 — Business Impact & Future

Performance Improvements

After deploying the new architecture, the platform’s performance improved dramatically.

Stream startup time, previously one of the most common user complaints, was reduced to under two seconds. Optimized HLS segmentation and improved CDN distribution enabled faster playback initialization across both web and mobile platforms.

Buffering issues were also significantly reduced. Through adaptive bitrate streaming and optimized edge caching, buffering incidents decreased by more than 80%.

Perhaps the most significant improvement was the platform’s ability to handle over 500,000 concurrent viewers during major live events without service disruption.

Platform reliability also improved substantially. With microservices architecture, auto-scaling infrastructure, and real-time monitoring, the system achieved 99.98% uptime across multiple regions.

Business Impact

The architectural improvements produced measurable business results.

First, subscriber retention improved. When streaming performance stabilized, viewers spent more time on the platform and cancellation rates declined.

Second, the platform experienced 40% growth in subscribers within months of launching the new infrastructure. Improved user experience and positive app store reviews accelerated customer acquisition.

Third, operational costs related to content delivery decreased. By optimizing CDN caching strategies, the platform reduced origin server traffic and lowered bandwidth costs.

Most importantly, the platform regained the ability to confidently host large-scale live events, which became major drivers of engagement and revenue.

Client Testimonial

"Our platform was growing faster than our infrastructure could support. Live events became a constant risk, and buffering issues were damaging our brand. The Boffin Coders team completely redesigned our streaming architecture. Today we handle hundreds of thousands of concurrent viewers with consistent performance."

— CTO, IPTV Platform

Future Scalability — Preparing for 500K+ Concurrent Users

The platform was designed not only to solve current challenges but also to support future expansion.

The microservices architecture allows the system to scale horizontally as demand increases. Additional backend services can be deployed automatically while CDN infrastructure expands globally.

Future enhancements may include:

- Ultra-low latency streaming

- AI-driven content recommendations

- Personalized video quality optimization

- Expanded global edge delivery

With this foundation in place, the platform is positioned to scale beyond 1 million concurrent viewers without requiring another architectural transformation.

In the rapidly evolving OTT market, scalability is not simply a technical advantage—it is the foundation for long-term growth and competitive differentiation.
